Anonymization of sensitive data by the combined approach of NLP and neural models

January 10, 2023, written by Sanoussi Alassan

Data exploitation is, more than ever, a major issue for every type of organization. It covers many use cases, from exploring data to extracting relevant and usable information, in order to:

- Understand the environment of an organization

- Better understand its employees

- Improve its services, products and processes (use case of production data in a test and/or development environment)

Handling this mass of information is not without consequences: it contains sensitive information whose disclosure may harm legal entities and/or individuals. This is why the European Parliament adopted the General Data Protection Regulation (GDPR) in April 2016 (it entered into force in May 2016), with the aim of framing data processing uniformly throughout the European Union. Its objectives are to strengthen the rights of individuals, to make the actors that process data more accountable, and to promote cooperation between data protection authorities. Pseudonymization and anonymization thus appear as indispensable techniques for protecting personal data and supporting regulatory compliance.

What are Pseudonymization and Anonymization?

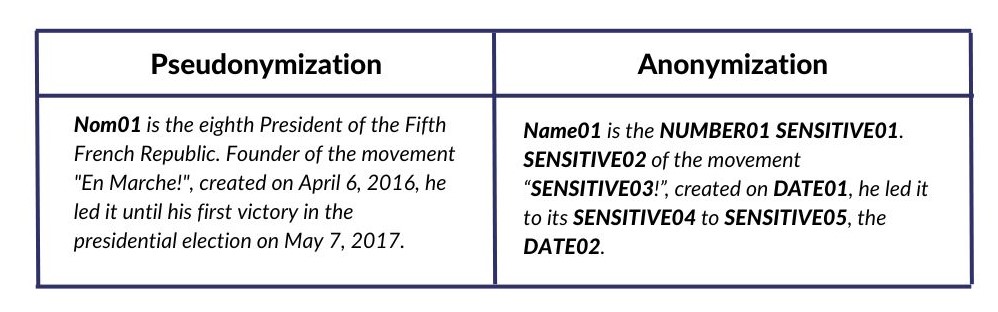

ENISA [1] (the European Union’s cybersecurity agency) defines pseudonymization as a de-identification process: sensitive data is processed in such a way that a natural person can no longer be directly identified without additional information. Anonymization, by contrast, is a process by which personal data is irreversibly altered so that the data subject can no longer be identified, directly or indirectly, either by the controller alone or in collaboration with third parties [1].
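The difference in reversibility can be illustrated with a minimal Python sketch. The function names, the `<PERSON_n>` token format and the `[REDACTED]` placeholder are assumptions made for this illustration, and the list of names is supplied by hand. Real anonymization would also have to handle indirect identifiers, which this sketch does not attempt.

```python
import itertools


def pseudonymize(text, names):
    """Replace each name with a token and return the lookup table.

    The lookup table is the "additional information": whoever holds it
    can re-identify the data, so it must be stored separately.
    """
    counter = itertools.count(1)
    mapping = {}
    for name in names:
        token = f"<PERSON_{next(counter)}>"
        mapping[token] = name
        text = text.replace(name, token)
    return text, mapping


def reidentify(text, mapping):
    """Reverse the pseudonymization using the lookup table."""
    for token, name in mapping.items():
        text = text.replace(token, name)
    return text


def anonymize(text, names):
    """Replace every name with the same placeholder; no table is kept,
    so the original names cannot be restored from the output."""
    for name in names:
        text = text.replace(name, "[REDACTED]")
    return text
```

With the lookup table, `reidentify` restores the original text. The output of `anonymize` carries no such table, which is the property the definitions above distinguish.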

Consider the following text: “Emmanuel MACRON is the eighth President of the Fifth French Republic. Founder of the “En Marche!” movement, created on April 6, 2016, he led it until his first victory in the presidential election on May 7, 2017.”

It contains three types of information:

- Named entities: Emmanuel MACRON, April 6, 2016, May 7, 2017, En Marche, eighth

- Mentions: President of the Fifth French Republic, Founder

- Other identifying morphemes: first victory, the presidential election

The following table summarizes the expected result when applying these two techniques

A third category of approach for processing sensitive data is emerging with the advances of neural algorithms in natural language processing: advanced pseudonymization. It can process the vast majority of the sensitive, identifying information in a text. However, edge cases remain in which the subject can still be identified by someone who knows the context. Consider the following text: “LinkedIn is a social network. In France, in 2022, LinkedIn has more than 25 million members and 12 million estimated monthly active members, making it the 6th largest social network.” Here the phrase “6th largest social network”, which is difficult to detect, can identify LinkedIn after some research on the Internet.

What is “sensitive data”?

Sensitive data is information that can identify a natural or legal person. For a natural person, this includes: full name (surname and first name), location, organization, date of birth, addresses (email, home), identifying numbers (credit card, social security, telephone), … For a legal person, it includes the company name, its address, its SIREN and SIRET identifiers, …
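Some of these categories have a regular surface form and can be spotted with simple patterns. The Python sketch below is illustrative only: the regular expressions are deliberately simplified, the function names are hypothetical, and it assumes that SIREN (9 digits) and SIRET (14 digits) numbers are written without spaces and carry a Luhn checksum, which holds in general but has known exceptions.

```python
import re

# Simplified patterns, written for this illustration only.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
SIREN_SIRET_RE = re.compile(r"\b(?:\d{14}|\d{9})\b")


def luhn_valid(digits):
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def find_identifiers(text):
    """Return (label, value) pairs for emails and plausible SIREN/SIRET numbers."""
    found = [("EMAIL", m.group()) for m in EMAIL_RE.finditer(text)]
    for m in SIREN_SIRET_RE.finditer(text):
        number = m.group()
        if luhn_valid(number):  # discard digit runs that fail the checksum
            label = "SIREN" if len(number) == 9 else "SIRET"
            found.append((label, number))
    return found
```

The checksum filter is a cheap way to reduce false positives: an arbitrary 9-digit order number will usually fail it, whereas a genuine SIREN generally passes.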

How to pseudonymize data?

The CNIL [2] describes two types of pseudonymization techniques: those that rely on the creation of relatively basic pseudonyms (counter, random number generator) and those that rely on cryptographic techniques (secret key encryption, hash function). These methods explain how sensitive data should be handled in the context of pseudonymization; they do not explain how to identify it. Identification can be simple when the data is tabular: it is then sufficient to delete or encrypt the contents of the relevant columns.
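As a rough illustration of these families of techniques on tabular data, the sketch below uses only the Python standard library. The function names and the `ID_n` format are assumptions made for this example, and HMAC-SHA256 is used here as one possible keyed hash function, not as the method prescribed by the CNIL.

```python
import hashlib
import hmac
import secrets


def counter_pseudonyms(values):
    """Basic technique: assign ID_1, ID_2, ... in order of first appearance."""
    mapping = {}
    for value in values:
        if value not in mapping:
            mapping[value] = f"ID_{len(mapping) + 1}"
    return mapping


def random_pseudonym():
    """Basic technique: draw a random 16-character hexadecimal pseudonym."""
    return secrets.token_hex(8)


def keyed_hash_pseudonym(value, key):
    """Cryptographic technique: keyed hash (HMAC-SHA256) of the value.

    The same value and key always give the same pseudonym; without the
    secret key, the pseudonym cannot be recomputed from a guessed value.
    """
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def pseudonymize_column(rows, column, key):
    """Replace one column of a list of dict rows with keyed-hash pseudonyms."""
    return [{**row, column: keyed_hash_pseudonym(row[column], key)} for row in rows]
```

A keyed hash keeps joins across tables possible, since equal values map to equal pseudonyms, while a counter or random pseudonym requires storing the correspondence table securely.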

At Novelis, we are working on advanced pseudonymization of sensitive data contained in free text. Identification in this context is complex and is often performed manually, which is costly in time and skilled human resources. Artificial intelligence (AI) and natural language processing (NLP) techniques are, however, now robust enough to automate this task. Two types of approaches to sensitive data extraction are generally distinguished: neural approaches and rule-based approaches. Although they provide excellent results, especially since the emergence of Transformers (a deep learning architecture), neural approaches require large datasets to be effective, and such datasets are not always available in industry. They also require annotation by experts to provide the models with a quality training dataset. Rule-based models, for their part, suffer from generalization problems: a rule-based model tends to achieve good accuracy on the sample used to design its rules but is harder to apply to a new dataset not covered by the initial assumptions.

The approach proposed by the Novelis R&D team

We propose a hybrid approach that exploits the strengths of both NLP techniques and neural models. First, we built a corpus containing addresses in order to train a neural model able to detect an address in a text. We benchmarked several models to choose the most suitable one, then improved it with a fine-tuning strategy. Combined with Python NLP libraries, the model provides a robust solution for extracting addresses and named entities such as people’s names, places and organizations. Patterns (regular expressions) were designed by Novelis experts to extract the other types of sensitive data identified. Finally, heuristics were used to disambiguate and correct the extracted information.
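The general shape of such a hybrid pipeline can be sketched as follows. This is not the Novelis implementation: every name below is hypothetical, the neural model is replaced by a trivial gazetteer lookup so that the example stays self-contained, and the only heuristic shown is a "longest span wins" rule for overlapping detections.

```python
import re
from typing import Callable, List, Tuple

Span = Tuple[int, int, str]  # (start, end, label)
Detector = Callable[[str], List[Span]]


def regex_detector(pattern: str, label: str) -> Detector:
    """Rule-based detector: every match of the pattern becomes a span."""
    compiled = re.compile(pattern)

    def detect(text: str) -> List[Span]:
        return [(m.start(), m.end(), label) for m in compiled.finditer(text)]

    return detect


def gazetteer_detector(terms: List[str], label: str) -> Detector:
    """Stand-in for a neural NER model: looks up a fixed list of strings."""

    def detect(text: str) -> List[Span]:
        spans = []
        for term in terms:
            for m in re.finditer(re.escape(term), text):
                spans.append((m.start(), m.end(), label))
        return spans

    return detect


def resolve_overlaps(spans: List[Span]) -> List[Span]:
    """Heuristic: keep longer spans first, drop spans overlapping a kept one."""
    kept: List[Span] = []
    for span in sorted(spans, key=lambda s: (-(s[1] - s[0]), s[0])):
        if all(span[1] <= k[0] or span[0] >= k[1] for k in kept):
            kept.append(span)
    return sorted(kept)


def mask(text: str, detectors: List[Detector]) -> str:
    """Run all detectors, resolve conflicts, and replace spans with labels."""
    spans = resolve_overlaps([s for detect in detectors for s in detect(text)])
    for start, end, label in reversed(spans):  # right to left keeps offsets valid
        text = text[:start] + f"[{label}]" + text[end:]
    return text
```

In a real system the gazetteer detector would be swapped for a fine-tuned model exposing the same span interface, so the rule-based and neural components can be combined and corrected by the same heuristics.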

With this approach, we have built a reliable and robust system for processing sensitive information contained in any type of document (PDF, Word, email, …). The goal is to relieve data processors of low value-added tasks through automated assistance.

References:

- [1] https://www.enisa.europa.eu/news/enisa-news/enisa-proposes-best-practices-and-techniques-for-pseudonymisation

- [2] https://www.cnil.fr/fr/recherche-scientifique-hors-sante/enjeux-avantages-anonymisation-pseudonymisation